A/B Testing Velocity: Accelerating Shopify Growth

Running an online store on Shopify can feel like a constant race to keep up. Every delay in testing new ideas is a missed opportunity for revenue and growth. That is why A/B testing velocity matters so much. The faster you experiment and learn, the quicker you find what actually increases conversions and sales. This article breaks down how you can harness fast, AI-driven testing without technical headaches, so your store keeps moving ahead of the competition.

Table of Contents

- A/B Testing Velocity Defined For Shopify

- Types And Approaches To Test Velocity

- Choosing The Right Approach For Your Store

- How Velocity Impacts Store Performance

- The Competitive Advantage Factor

- Key Factors Influencing Test Speed

- The Traffic Factor

- Risks And Mistakes Slowing Velocity

- Operational Infrastructure Failures

Key Takeaways

| Point | Details |
|---|---|
| A/B Testing Velocity | High testing velocity allows Shopify store owners to run experiments consistently, leading to quicker insights and revenue growth. |
| Critical Factors for Success | Pre-tested variations, quick deployment without coding, and clear data are essential for maximizing A/B testing velocity. |
| Impact on Performance | A higher testing velocity correlates with increased conversion rates and improved revenue, compressing decision-making time. |
| Avoiding Common Pitfalls | Misuse of statistical methods and technical failures can hinder testing velocity; proper setup and monitoring are crucial for valid results. |

A/B Testing Velocity Defined for Shopify

A/B testing velocity represents the speed and efficiency with which you run experiments on your Shopify store and extract actionable insights from them. At its core, A/B testing is a method of experimentation where two versions of a variable are compared to determine which performs better at optimizing outcomes like clicks or conversions. For Shopify store owners, velocity goes beyond just running tests randomly. It’s about creating a systematic rhythm of testing, learning, and iterating that compounds growth over time. Rather than conducting one test every quarter and hoping for results, velocity means deploying meaningful experiments consistently throughout the month, analyzing data quickly, and applying winning variations to drive immediate revenue gains.

What separates high-velocity testing from slow, ineffective testing is the ability to eliminate friction from your experimentation process. Traditional approaches require technical expertise, custom development, or hiring consultants to set up each test. This creates bottlenecks that slow everything down. When you’re waiting weeks for a developer to code a test, you’ve already lost momentum and market opportunity. Online controlled experiments measure causal impact: they show which changes actually drive conversions and which differences are just noise. The difference with velocity is that these experiments happen rapidly and repeatedly, allowing you to accumulate small wins that build into substantial growth. A test deployed this week might show a 2% conversion lift, which is modest on its own. But when you run 10 tests per month and even a portion of them produce similar results, the gains compound into improvements that can transform your annual revenue.
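The compounding arithmetic can be checked with a short sketch. It assumes, purely for illustration, that each winning test multiplies the conversion rate by the same factor and that wins are independent and persist; `compounded_lift` and its `win_rate` parameter are hypothetical names, not part of any Shopify tool.

```python
def compounded_lift(lift_per_win: float, tests_run: int, win_rate: float = 1.0) -> float:
    """Return the cumulative relative lift from a batch of tests.

    Assumes each winning test multiplies the conversion rate by
    (1 + lift_per_win) and that wins are independent and persist.
    """
    expected_wins = tests_run * win_rate
    return (1 + lift_per_win) ** expected_wins - 1


# Ten tests in a month, each assumed to deliver a 2% lift.
print(f"All ten win: {compounded_lift(0.02, 10):.1%}")
# A more cautious assumption: only 3 in 10 tests produce a winner.
print(f"Three in ten win: {compounded_lift(0.02, 10, win_rate=0.3):.1%}")
```

Under these assumptions, ten 2% wins compound to roughly 22%, while three wins out of ten compound to about 6%. Real results depend on how many tests actually win and whether their effects overlap.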

For Shopify stores specifically, velocity depends on three critical factors. First, you need pre-tested variations with a track record, not generic hypotheses that are likely to fail. Second, you need the ability to deploy tests without writing code or waiting for technical resources. Third, you need data that’s clear enough to act on immediately, without statistical ambiguity. When these three elements align, your store shifts from static to dynamic. You stop asking “what should we test next?” and instead start asking “which proven variation should we deploy first?” This mindset change accelerates everything because you’re making decisions based on evidence, not guesswork.

Pro tip: Start with one test per week rather than trying to run five simultaneously; focus on deploying high-impact variations quickly and let each result inform your next move.

Types and Approaches to Test Velocity

Not all testing approaches move at the same speed. Some methods spend more traffic than a decision needs, while others reach decisions faster with little loss of accuracy. Understanding the different approaches to testing helps you choose the right method for your Shopify store’s situation. The most basic approach is the simple A/B split, where you divide traffic equally between two variations and wait for a predetermined sample size before declaring a winner. This works, but it’s slow. You’re locked into waiting whether the results are obvious after day three or ambiguous after three weeks. A faster alternative is the multi-armed bandit approach, which dynamically adjusts traffic allocation toward better-performing variants. Instead of splitting traffic 50/50 the entire time, the system learns as data comes in and gradually sends more visitors to the variation that is performing better. This means you spend less traffic on underperforming options and accelerate your path to meaningful results.
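One common way to implement a multi-armed bandit is Thompson sampling, sketched below with only the Python standard library. This illustrates the idea and does not describe how any particular Shopify app allocates traffic. The function names, the uniform prior, and the simulated conversion rates are all assumptions made for the example.

```python
import random


def choose_variant(stats: dict, rng: random.Random) -> str:
    """Pick the variant with the highest sampled conversion rate.

    stats maps a variant name to (conversions, visitors). Each variant gets a
    Beta(1 + conversions, 1 + non-conversions) posterior (uniform prior).
    """
    samples = {
        name: rng.betavariate(1 + conversions, 1 + visitors - conversions)
        for name, (conversions, visitors) in stats.items()
    }
    return max(samples, key=samples.get)


def simulate(true_rates: dict, total_visitors: int, seed: int = 42) -> dict:
    """Route simulated visitors one at a time and return the final stats."""
    rng = random.Random(seed)
    stats = {name: (0, 0) for name in true_rates}
    for _ in range(total_visitors):
        name = choose_variant(stats, rng)
        conversions, visitors = stats[name]
        converted = rng.random() < true_rates[name]
        stats[name] = (conversions + converted, visitors + 1)
    return stats


# Hypothetical true conversion rates, unknown to the algorithm.
result = simulate({"control": 0.05, "variant": 0.10}, total_visitors=5000)
for name, (conversions, visitors) in result.items():
    print(f"{name}: {visitors} visitors, {conversions} conversions")
```

Early on, both variations receive traffic because their posteriors overlap. As evidence accumulates, the stronger variation wins most of the draws and therefore most of the visitors.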

For stores with several ideas to evaluate, simultaneous multiple-variant testing lets you compare several variations at once rather than running them sequentially. Instead of testing variation A against the control, then testing variation B against the control, then variation C, you test all three against the control in a single experiment. This cuts your timeline dramatically because you’re not waiting months to run each test one at a time, although each variation receives a smaller share of traffic, so it works best when you have enough visitors to go around. Another velocity amplifier is sequential testing with anytime-valid inference, which represents a fundamental shift in how statistical analysis works. Sequential analysis allows continuous monitoring of experiment data without sacrificing statistical rigor, meaning you can peek at results daily and make decisions the moment statistical confidence reaches your threshold, rather than waiting for a fixed end date. Traditional fixed-sample testing forces you to wait even if results are crystal clear after half the projected time.
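One published construction for anytime-valid inference is the mixture sequential probability ratio test (mSPRT). The sketch below is a minimal version under a normal approximation, assuming equal traffic per arm. The mixing variance `tau_sq` and the example counts are illustrative assumptions, and plugging in the pooled conversion variance makes the guarantee approximate rather than exact.

```python
import math


def log_mixture_likelihood_ratio(n, rate_diff, arm_variance, tau_sq):
    """Log of the normal-mixture likelihood ratio against 'no difference'.

    n            -- visitors per arm so far (equal allocation assumed)
    rate_diff    -- variant conversion rate minus control conversion rate
    arm_variance -- per-visitor variance within one arm, p * (1 - p)
    tau_sq       -- variance of the N(0, tau_sq) mixing prior on the true difference
    """
    diff_variance = 2 * arm_variance  # variance of one (variant - control) pair
    denom = diff_variance + n * tau_sq
    shrink = 0.5 * math.log(diff_variance / denom)
    evidence = (n ** 2) * tau_sq * rate_diff ** 2 / (2 * diff_variance * denom)
    return shrink + evidence


def always_valid_p_values(cumulative_looks, tau_sq=1e-4):
    """cumulative_looks: (control_conversions, variant_conversions, visitors_per_arm) per look."""
    p_value = 1.0
    history = []
    for control_conv, variant_conv, n in cumulative_looks:
        pooled = (control_conv + variant_conv) / (2 * n)
        arm_variance = pooled * (1 - pooled)
        if arm_variance > 0:
            log_ratio = log_mixture_likelihood_ratio(
                n, variant_conv / n - control_conv / n, arm_variance, tau_sq
            )
            # Taking the running minimum means the p-value never increases.
            p_value = min(p_value, math.exp(-log_ratio))
        history.append(p_value)
    return history


# Hypothetical cumulative counts at three successive looks.
looks = [(30, 30, 1000), (150, 200, 5000), (300, 450, 10000)]
for look, p in zip(looks, always_valid_p_values(looks)):
    print(look, f"p = {p:.4g}")
```

Because the p-value is valid at every look, you can stop as soon as it falls below your threshold instead of waiting for a preset end date.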

Here’s a comparison of different Shopify A/B testing methodologies and their impact on test velocity:

| Testing Method | Speed of Results | Data Efficiency | Suitable Store Size |
|---|---|---|---|
| Simple A/B Split | Slow | Basic | Small or new stores |
| Multi-Armed Bandit | Fast | Adaptive, efficient | Medium to high traffic |
| Simultaneous Variants | Moderate | Multiple insights | Medium to large stores |
| Sequential Testing | Very fast | Continuous analysis | High-volume, mature stores |

Choosing the Right Approach for Your Store

Your choice depends on what matters most. If you’re new to testing and want to build confidence in your process, start with simple A/B splits. They’re easier to understand and explain to stakeholders. If you’re running multiple tests monthly and want to accelerate results, multi-armed bandits and sequential testing compress timelines significantly. Many high-velocity Shopify stores use a hybrid approach: simple A/B tests for straightforward decisions and multi-armed bandits when they want to optimize traffic allocation in real-time. The key is matching your methodology to your volume and tolerance for complexity.

Pro tip: If you’re running fewer than three tests per month, stick with simple A/B splits; if you’re running five or more, invest time in understanding sequential testing methods to reclaim weeks of lost optimization time.

How Velocity Impacts Store Performance

Testing velocity is not just about moving fast for the sake of it. Higher testing velocity directly translates into measurable business outcomes. When you run experiments frequently and iterate based on results, you’re essentially compressing months of guesswork into weeks of data-driven decisions. Fast experimentation velocity improves store performance by increasing organizational learning and enabling e-commerce stores to identify effective strategies while failing fast on poor ideas. This shortens your feedback loop dramatically. Instead of launching a new product feature, waiting three months for sales data, and then discovering it didn’t work, you test variations within days, validate assumptions quickly, and either refine or pivot. Your store learns faster. Your team makes better decisions. Your revenue responds accordingly.

The connection between velocity and revenue is direct and measurable. A/B testing velocity correlates with increased user engagement, higher conversion rates, and revenue gains for e-commerce platforms. Faster testing cycles reduce decision latency, allowing your store to refine the user experience and optimize conversion funnels rapidly. Think about it this way. Every day you leave a conversion bottleneck in place is a day you’re leaving money on the table. A slow testing process means that bottleneck persists for weeks. A fast testing process means you identify it, test a solution, measure the impact, and deploy a winner within days. When you multiply that across 10, 20, or 50 tests throughout the year, the cumulative effect on your bottom line becomes substantial. Stores running multiple tests monthly see revenue improvements that compound throughout the year.

The Competitive Advantage Factor

Beyond immediate revenue gains, velocity builds organizational advantage. Your competitors are probably running one or two tests per month. You’re running five or ten. Over the course of a year, that’s anywhere from 36 to 108 additional experiments you’ve completed compared to them. Each test generates learning. Each learning either removes friction from your customer journey or identifies a new revenue opportunity. After 12 months of this difference, you don’t just have better conversion rates. You have fundamentally optimized your store in ways your competitors haven’t discovered yet. This becomes your durable competitive moat. Faster-learning organizations outpace slower ones consistently.

Pro tip: Track your test velocity monthly as a business metric alongside your conversion rate; when you consistently run 3 or more tests per month, you should start to see compounding improvements within 90 days.

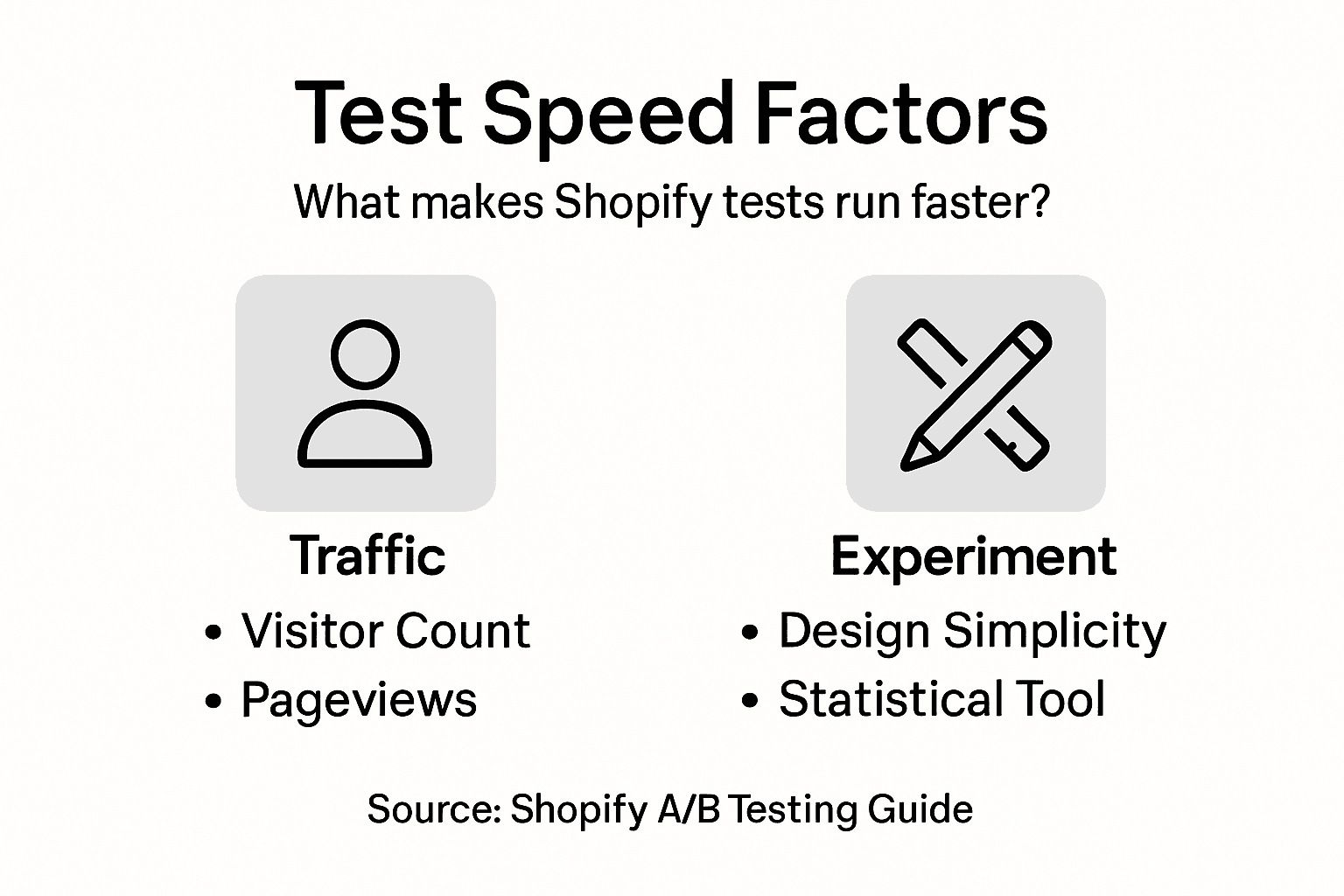

Key Factors Influencing Test Speed

Several interconnected factors determine how quickly you can move through your testing cycle. Understanding these allows you to optimize the ones you control and work strategically around the ones you cannot. Sample size is perhaps the most obvious factor. Larger sample sizes provide statistical confidence but require more time and traffic to accumulate. Smaller sample sizes let you finish tests faster but risk statistical uncertainty. The balance between these creates tension that every store owner must navigate. Related to sample size is traffic allocation, which determines how many visitors see each variation. When you split traffic evenly across several variations, each one builds sample size slowly. When you use adaptive allocation to favor better performers, fewer visitors are spent on clearly losing variations, so the leading candidates reach statistical confidence sooner. In favorable cases this can reduce test length by up to 50 percent, which is a dramatic acceleration for stores running multiple experiments monthly.

Experiment design complexity also matters significantly. A simple test with one variable and two versions (control and variant) completes faster than a complex multivariate test with multiple variables and dozens of potential combinations. Simple tests answer straightforward questions: Does this button color drive more clicks? Does this headline increase add-to-cart rates? Complex tests require more traffic and time to gather reliable data across all combinations. For high-velocity testing, you want to keep most experiments simple and reserve multivariate testing for specific situations. Additionally, key factors influencing test speed include statistical methodology innovations such as multi-armed bandits and interference-free test designs. These modern approaches improve velocity by enhancing efficiency and reducing bias compared to traditional fixed-sample testing.

To better understand key factors influencing A/B testing velocity and business impact, refer to this summary:

| Factor | Role in Velocity | Business Benefit |
|---|---|---|
| Sample Size | Controls test duration | Ensures accuracy |
| Traffic Allocation | Speeds confidence | Reduces wasted traffic |
| Experiment Complexity | Affects timeline | Enables focused learning |
| Statistical Innovation | Improves efficiency | Minimizes bias and error |

The Traffic Factor

Your store’s daily traffic is perhaps the single largest constraint on testing speed. A Shopify store receiving 10,000 daily visitors can accumulate sample size much faster than a store receiving 1,000 daily visitors. If your store is lower-traffic, you have two options. First, you can run longer tests to gather sufficient data. Second, you can focus on high-impact variations, because a dramatic improvement needs a smaller sample to reach confidence. Many successful lower-traffic stores use a combination approach: they test fewer variations simultaneously but run more tests throughout the year. Higher-traffic stores can test multiple variations in parallel because they accumulate sample size quickly.

Pro tip: Focus your early tests on variations with expected 10 percent or higher uplift potential; when a variation shows dramatic improvement, you reach statistical confidence faster, allowing you to deploy winners and run additional tests within the same timeframe.
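To see how traffic and expected uplift interact, the sketch below applies the standard sample-size approximation for a two-sided, two-proportion z-test. The 3% baseline conversion rate, the 5% significance level, and 80% power are assumptions chosen for illustration, and the function names are hypothetical.

```python
import math
from statistics import NormalDist


def visitors_per_variation(baseline_rate, relative_uplift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variation to detect the given uplift."""
    variant_rate = baseline_rate * (1 + relative_uplift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance_sum = (baseline_rate * (1 - baseline_rate)
                    + variant_rate * (1 - variant_rate))
    effect = variant_rate - baseline_rate
    return math.ceil((z_alpha + z_power) ** 2 * variance_sum / effect ** 2)


def days_to_finish(daily_visitors, baseline_rate, relative_uplift, variations=2):
    """Days of traffic needed when visitors are split evenly across variations."""
    needed = variations * visitors_per_variation(baseline_rate, relative_uplift)
    return math.ceil(needed / daily_visitors)


# Assumed 3% baseline conversion rate; compare uplift sizes and traffic levels.
for uplift in (0.05, 0.10, 0.20):
    per_arm = visitors_per_variation(0.03, uplift)
    slow = days_to_finish(1_000, 0.03, uplift)
    fast = days_to_finish(10_000, 0.03, uplift)
    print(f"{uplift:.0%} uplift: {per_arm} per variation, "
          f"{slow} days at 1,000/day, {fast} days at 10,000/day")
```

Under these assumptions, detecting a 10 percent relative uplift takes on the order of 50,000 visitors per variation. That is months of traffic at 1,000 daily visitors and under two weeks at 10,000. Doubling the detectable uplift cuts the requirement to roughly a quarter, which is why larger expected effects finish so much sooner.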

Risks and Mistakes Slowing Velocity

Not all testing programs move at high velocity. Many slow down because of preventable mistakes and overlooked risks that compound over time. One critical risk is statistical misuse. Store owners running fixed-sample tests often peek at results early and declare winners prematurely, or run tests without sufficient sample size. Unlike the sequential methods described earlier, a fixed-sample test is not designed for repeated looks. These shortcuts feel like they save time, but they backfire. You deploy a variation that looked promising after one week but fails once the full two weeks of data are in. Now you’ve wasted resources and lost customer trust. Worse, you might have introduced a change that actually hurts conversions. Common mistakes that reduce testing velocity include misuse of statistical methods leading to false positives, selecting incorrect metrics, and operational challenges such as bugs in experimental infrastructure. These errors prolong experiments and decrease the reliability of insights, limiting growth acceleration. A false positive means you deploy a loser, which stalls your momentum.
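The cost of early peeking can be shown with a small simulation of A/A tests, in which both arms have the same true conversion rate, so every declared winner is a false positive. The rates, look counts, and function names below are assumptions for illustration only.

```python
import math
import random


def z_stat(conv_a, n_a, conv_b, n_b):
    """Two-proportion z statistic using the pooled conversion rate."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return 0.0 if se == 0 else (conv_b / n_b - conv_a / n_a) / se


def simulate_aa_tests(n_sims=400, looks=10, visitors_per_look=200,
                      rate=0.10, z_crit=1.96, seed=7):
    """Return (false positive rate when peeking, false positive rate at the final look)."""
    rng = random.Random(seed)
    peeking_hits = fixed_hits = 0
    for _ in range(n_sims):
        conv_a = conv_b = n = 0
        significant_at_any_look = False
        significant_now = False
        for _ in range(looks):
            conv_a += sum(rng.random() < rate for _ in range(visitors_per_look))
            conv_b += sum(rng.random() < rate for _ in range(visitors_per_look))
            n += visitors_per_look
            significant_now = abs(z_stat(conv_a, n, conv_b, n)) > z_crit
            significant_at_any_look = significant_at_any_look or significant_now
        peeking_hits += significant_at_any_look  # stopped at the first "win"
        fixed_hits += significant_now            # judged only at the planned end
    return peeking_hits / n_sims, fixed_hits / n_sims


peeking_fpr, fixed_fpr = simulate_aa_tests()
print(f"False positives when stopping at any significant look: {peeking_fpr:.1%}")
print(f"False positives when judging only the final look: {fixed_fpr:.1%}")
```

Judging only at the planned end keeps the false positive rate near the nominal 5 percent. Stopping at the first significant look pushes it well above that, even though nothing differs between the two arms.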

Another significant risk is metric selection error. You run a test tracking the wrong metric and reach false conclusions. For example, you test a new checkout flow and track page views rather than actual conversions. Page views might increase while conversions decrease, yet you declare the test a winner based on the wrong metric. Now you’ve deployed a change that hurts revenue while looking like a success. This cascades into future testing decisions based on flawed assumptions. Additionally, interference bias, where one user’s outcome depends on what other users experience, violates the independence assumption behind A/B tests and delays valid conclusions. In Shopify stores, this might occur when one customer’s experience influences another customer’s behavior or when test variations leak into unexpected places. These biases make results unreliable, forcing you to rerun tests or question conclusions you should be confident about.

Operational Infrastructure Failures

Behind the scenes, bugs in experimental infrastructure create silent killers of velocity. A tracking code that fires inconsistently means your data is incomplete. A test that fails to deploy correctly to all devices means some traffic isn’t randomized properly. These technical failures aren’t always obvious. Your test completes, data looks reasonable, but conclusions are built on a corrupted foundation. You deploy a change based on flawed data, it underperforms, and now you’re investigating why. Technical debt accumulates when infrastructure isn’t regularly audited and validated. The antidote is testing your testing infrastructure before launching customer-facing experiments. Verify tracking works. Verify randomization is consistent. Verify variations deploy to the right audience. This overhead feels slow initially but prevents cascading delays later.

Pro tip: Before deploying any test to customers, run a sanity check with internal traffic to verify tracking fires correctly, variations display as intended, and no technical issues exist; catching bugs early prevents false conclusions that waste weeks of future optimization.
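One concrete way to verify that randomization is consistent is a sample ratio mismatch (SRM) check. It compares the visitor counts each variation actually received against the split you intended. The sketch below uses a chi-square goodness-of-fit test for two groups. The 0.001 alert threshold is a commonly used convention rather than a rule, and the function name is hypothetical.

```python
import math


def srm_p_value(control_visitors, variant_visitors, expected_control_share=0.5):
    """P-value for the observed split under the intended traffic allocation."""
    total = control_visitors + variant_visitors
    expected_control = total * expected_control_share
    expected_variant = total - expected_control
    chi_sq = ((control_visitors - expected_control) ** 2 / expected_control
              + (variant_visitors - expected_variant) ** 2 / expected_variant)
    # With two groups the statistic has 1 degree of freedom, so the upper-tail
    # probability reduces to erfc(sqrt(chi_sq / 2)).
    return math.erfc(math.sqrt(chi_sq / 2))


# Hypothetical visitor counts from a test intended to split traffic 50/50.
for control, variant in [(5000, 5050), (5000, 5500)]:
    p = srm_p_value(control, variant)
    status = "investigate before trusting results" if p < 0.001 else "split looks consistent"
    print(f"{control} vs {variant}: p = {p:.3g} ({status})")
```

A very small p-value suggests that assignment or tracking is broken for part of your traffic, which is worth resolving before you read anything into the conversion numbers.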

Accelerate Your Shopify Store Growth with High-Velocity Testing

The challenge of maintaining A/B testing velocity is real for Shopify store owners. Running effective experiments quickly without relying on developers or complicated setups can feel overwhelming. If you want to stop waiting weeks for test results and start making data-driven decisions with confidence, then solutions that provide pre-tested variations, easy deployment, and clear actionable insights are exactly what you need. Automagic.li solves this pain point by offering a library of over 40 AI-powered, proven test variations tailored for Shopify stores. You can deploy impactful experiments fast without writing a single line of code. This means you eliminate bottlenecks, accelerate your learning curve, and grow your revenue faster.

Ready to transform your store into a high-velocity testing machine? Visit Automagic.li now to explore how our platform helps Shopify merchants deploy winning A/B tests efficiently. With AI-driven customization, instant data clarity, and no technical barriers, you can boost conversion rates and outpace competitors who are stuck in slow testing cycles. Don’t let slow experimentation hold your store back when you can start optimizing today. See how easy accelerating growth can be at Automagic.li and learn more about harnessing fast experimentation with AI-powered Shopify A/B Testing.

Frequently Asked Questions

What is A/B testing velocity?

A/B testing velocity refers to the speed and efficiency with which experiments are conducted on a Shopify store to derive actionable insights. It emphasizes frequent testing, quick data analysis, and rapid implementation of successful variations to optimize outcomes like clicks and conversions.

How does testing velocity impact store performance?

Higher testing velocity leads to measurable business outcomes by enabling faster iterations based on data-driven decisions. It compresses the feedback loop, allowing stores to identify and resolve conversion bottlenecks quickly, ultimately increasing revenue and enhancing user experience.

What are the key factors that influence A/B testing velocity?

Key factors include sample size, traffic allocation, experiment design complexity, and the use of statistical methods. Balancing these elements helps optimize testing speed, ensuring that results are reliable while also enabling quick decision-making.

What types of A/B testing methods can enhance testing velocity?

Methods that enhance testing velocity include multi-armed bandit approaches, simultaneous multiple-variant testing, and sequential testing with anytime-valid inference. The simple A/B split is the slowest of the approaches covered here, but it is the easiest to start with. Each method varies in speed and efficiency, allowing stores to select the best approach based on their testing needs.
